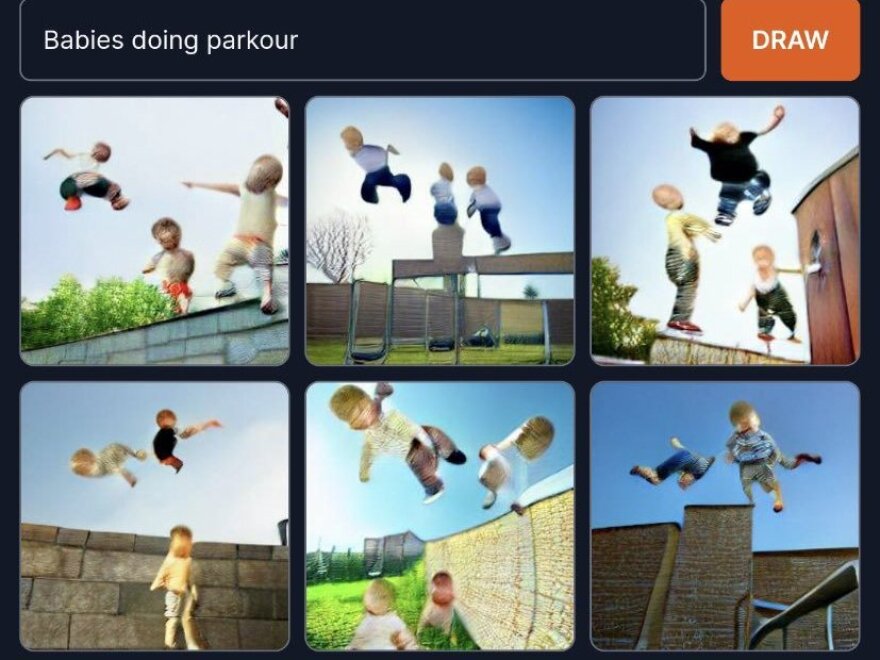

DALL-E mini is the AI bringing to life all of the goofy "what if" questions you never asked: What if Voldemort was a member of Green Day? What if there was a McDonald's in Mordor? What if scientists sent a Roomba to the bottom of the Mariana Trench?

You don't have to wonder what a Roomba cleaning the bottom of the Mariana Trench would look like anymore. DALL-E mini can show you.

DALL-E mini is an online text-to-image generator that has exploded in popularity on social media in recent weeks.

The program takes a text phrase — like "mountain sunset," "Eiffel tower on the moon," "Obama making a sand castle," or anything else you could possibly imagine — and creates an image out of it.

Some results are strangely beautiful, like "synthwave buddha" or "a chicken nugget smoking a cigarette in the rain." Others, like "Teletubbies in nursing home," are truly terrifying.

DALL-E mini gained internet notoriety after social media users started using the program to mash recognizable pop culture icons into bizarre, photorealistic memes.

Boris Dayma, a Texas-based computer engineer, originally created DALL-E mini as an entry in a coding competition. Dayma's program gets its name from the AI it's based on: Inspired by the artificial intelligence company OpenAI's incredibly powerful DALL-E, DALL-E mini is basically a web app that applies similar technology in a more easily accessible way. (Dayma has since renamed DALL-E mini to Craiyon at OpenAI's request.)

While OpenAI restricts most access to its models, Dayma's model can be used by anyone on the internet, and it was developed through collaboration with the AI research communities on Twitter and GitHub.

"I would get interesting feedback and suggestions from the AI community," Dayma told NPR over the phone. "And it became better, and better, and better" at generating images, until it reached what Dayma referred to as "a viral threshold."

While the images DALL-E mini produces can still look distorted or unclear, Dayma says they have become consistently good enough, and the audience wide enough, that the conditions were right for the project to go viral.

Learning from the past, and a complicated future

While DALL-E mini is unique in its widespread accessibility, this isn't the first time AI-generated art has been in the news.

In 2018, the art auction house Christie's sold an AI-generated portrait for over $400,000.

Ziv Epstein, a researcher at the MIT Media Lab's Human Dynamics Group, says the advancement of AI image generators complicates notions of ownership in the art industry.

In the case of machine-learning models like DALL-E mini, there are numerous stakeholders to account for when considering who should get credit for creating a piece of art.

"These tools are these diffuse socio-technical systems," Epstein told NPR. "[AI art generation is a] complicated arrangement of human actors and computational processes interacting in this kind of crazy way."

First, there are the coders who created the model.

For DALL-E mini, that's primarily Dayma, but also members of the open-source AI community who collaborated on the project. Then there are the owners of the images the AI was trained on — Dayma used an existing library of images to tweak the model, essentially teaching the program how to translate text to images.

Finally, there's the user who came up with the text prompt — like "CCTV footage of Darth Vader stealing a unicycle" — for DALL-E mini to use. So it's hard to say who exactly "owns" this image of Gumby performing an NPR Tiny Desk concert.

when @nprmusic pic.twitter.com/0cndlsSz8z

— sofie hs 🤠 (@zerosuitsofie) June 22, 2022

Some developers also worry about the ethical implications of AI media generators.

Deepfakes, which use machine-learning models to render often-convincing fake images of politicians or celebrities, are a top concern for software engineer James Betker.

Betker is the creator of Tortoise, a text-to-speech program that implements some of the latest machine-learning techniques to generate speech based on a reference voice.

Betker started Tortoise as a side project, but he said he's not motivated to continue developing it because of its potential for misuse.

"That's what I'm absolutely concerned about — people trying to get politicians to say things that they didn't actually say, or even making affidavits that you take to court ... [that are] completely faked," Betker told NPR.

But the accessibility of open-source AI projects like Dayma's and Betker's has also produced some positive effects. Tortoise has given developers who can't afford to hire voice actors a way to create realistic voice-overs for their projects. Similarly, Dayma said small businesses have used DALL-E mini to generate graphics when they couldn't afford to hire a designer.

The increasing accessibility of AI tools might also help people familiarize themselves with the potential threats of AI-generated media. To Dayma and Betker, the accessibility of their projects shows people the rapidly advancing capabilities of AI and its ability to spread misinformation.

MIT's Epstein said the same: "If people are able to interact with AI and kind of be a creator themselves, in a way it kind of inoculates them, maybe, against misinformation."

Copyright 2022 NPR. To see more, visit https://www.npr.org.